Bag All You Need:

Learning a Generalizable Bagging Strategy for Heterogeneous Objects

IROS 2023

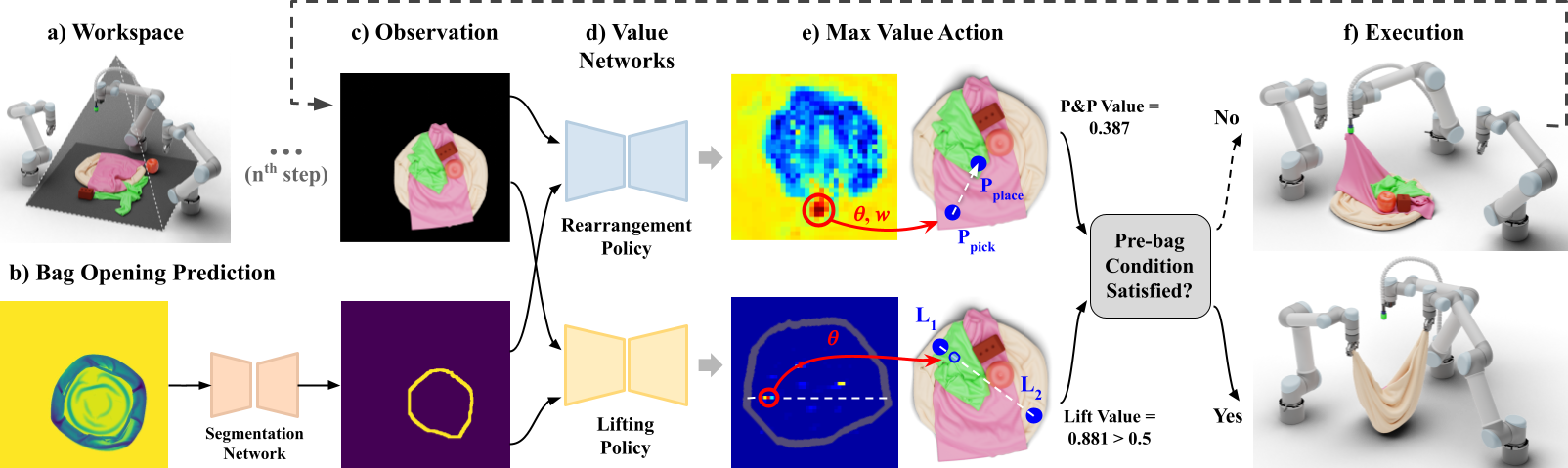

We introduce a practical robotics solution for the task of heterogeneous bagging, requiring the placement of multiple rigid and deformable objects into a deformable bag. This is a difficult task as it features complex interactions between multiple highly deformable objects under limited observability. To tackle these challenges, we propose a robotic system consisting of two learned policies: a rearrangement policy that learns to place multiple rigid objects and fold deformable objects in order to achieve desirable pre-bagging conditions, and a lifting policy to infer suitable grasp points for bi-manual bag lifting. We evaluate these learned policies on a real-world three-arm robot platform that achieves a 70% heterogeneous bagging success rate with novel objects. To facilitate future research and comparison, we also develop a novel heterogeneous bagging simulation benchmark that will be made publicly available.

Object Rearrangement

Bag Lift

Paper & Code

Latest Paper Version: ArXiv. Code and instructions to download data coming soon.

Team

* indicates equal contribution

1 Columbia University

2 Toyota Research Institute

Technical Summary Video (with audio)

System and Task Setup

Our system consists of (a) three robot arms and a top camera with a view of the workspace. A top-down depth image of the bag (before placing any other objects) is used to (b) predict the bag opening boundary. For each step, (c) a top-down RGB image and the predicted bag opening mask are input to (d) the rearrangement and lifting policies, which each output (e) a dense value map and the action corresponding to the highest pick-and-place or lift score, respectively. If the pre-bagging condition is satisfied, the bag is (f) lifted from the lift points predicted by the lifting network. Otherwise, we (f) execute a rearrangement action and return to (c) for the next step.
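The per-step decision described above can be illustrated with a minimal sketch. It is not our released implementation: the function names `best_pixel` and `choose_step`, the dictionary layout of the returned action, and the use of a single 2D array per value map are assumptions made for illustration. The pre-bagging check is passed in as a boolean because how it is computed is not specified here, and the sketch returns only one lift pixel even though the real system lifts bi-manually.

```python
import numpy as np


def best_pixel(value_map):
    """Return ((row, col), score) of the highest-scoring pixel in a 2D value map."""
    idx = np.unravel_index(np.argmax(value_map), value_map.shape)
    return (int(idx[0]), int(idx[1])), float(value_map[idx])


def choose_step(rearrange_map, lift_map, pre_bagging_ok):
    """Pick the next action for one step of the loop.

    rearrange_map, lift_map: hypothetical 2D dense value maps, one score per pixel.
    pre_bagging_ok: assumed to be computed elsewhere (not specified in the text).
    """
    if pre_bagging_ok:
        # Pre-bagging condition satisfied: lift at the best-scoring lift pixel.
        pixel, score = best_pixel(lift_map)
        return {"type": "lift", "pixel": pixel, "score": score}
    # Otherwise execute the best-scoring rearrangement action and loop again.
    pixel, score = best_pixel(rearrange_map)
    return {"type": "rearrange", "pixel": pixel, "score": score}
```

A caller would invoke `choose_step` once per observation, execute the returned action on the robot, and capture a new top-down image until a lift action is returned.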

Real World Results

Two Objects

Heuristic

Ours

Three Objects

Heuristic

Ours

Four Objects

Heuristic

Ours

Five Objects

Heuristic

Ours

Interesting Cases

The following two examples demonstrate how heuristically placing all objects at the center of the bag can lead to failure. While our rearrangement policy learns to arrange each object relative to other objects inside the bag opening, the heuristic method stacks the strawberry soft toy on top of the orange cup, causing both objects to fall away.

Heuristic

Ours

In the second example, the heuristic places the apple on top of the pile of clothes, causing it to roll out of the workspace.

Heuristic

Ours

The following example shows that even when the heuristic rearrangement policy achieves a desirable pre-bagging configuration, learning where to lift the bag from is crucial.

Heuristic

Ours

Failure Analysis

The most common failure case was during bag grasping; for instance, the robot would inadvertently grasp a cloth close to one of the predicted lift points and lift the cloth instead of the bag. Another observed failure mode is suboptimal object rearrangement; for instance, a rigid object placed upon a pile of cloths is generally unstable.

Bag grasping failure

Suboptimal rearrangement failure

Contact

If you have any questions, please feel free to contact Arpit Bahety or Shreeya Jain.